Why 'Conscious Tech' Is Spiking — And What It Actually Means

AI intimacy, data-sentient environments, and human nervous-system limits have all hit the same inflection point at once. "Conscious tech" isn't a niche — it's the name for what happens when the stack becomes spiritual and political whether we like it or not.

"Conscious technologists" are simply the people willing to take that fact seriously: on purpose, in public, and right when the world needs it most.

This post explains why right now is the inflection. Not in abstract terms, but in terms of three specific curves that are crossing simultaneously — and what that convergence demands from anyone who designs, governs, or simply uses technology with their eyes open.

Three Curves Are Crossing

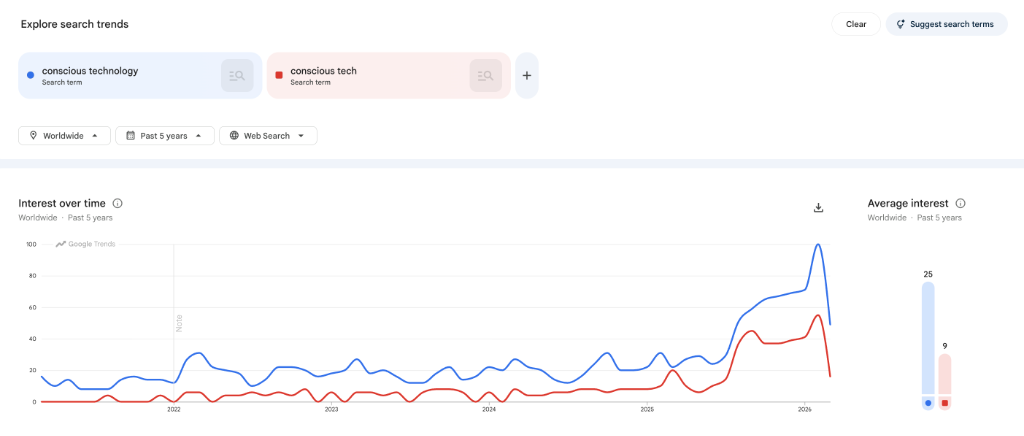

There is a reason the term "conscious technology" is spiking in search trends and showing up in venture theses, policy papers, and conference keynotes that would have ignored it two years ago.

It is not because the term became fashionable. It is because three structural shifts — each significant on its own — reached a point of simultaneous intensity that makes the old frames insufficient.

Curve 1: AI moved from abstraction to intimate co-presence. For decades, AI was background infrastructure — recommendation engines, spam filters, logistics optimization. In the last two to three years, it became a conversational, creative counterpart sitting in everyone's browser. That shift triggered a new phase of the consciousness debate that has moved from academic journals to mainstream discourse.

Curve 2: The built environment became aware and responsive. Our cities, homes, vehicles, and workflows are now sensorized, datafied, and increasingly agentic — systems that don't just collect information but act on it autonomously.

Curve 3: Humans hit cognitive and nervous-system limits. The productivity paradigm that drove the last two decades of software adoption has run into a hard biological wall — and the evidence is now showing up in healthcare data, burnout research, and ADHD diagnoses at population scale.

Each of these is worth understanding on its own. Together, they explain why "conscious technology" isn't a niche interest. It is the emerging default frame for anyone who wants to build, govern, or use technology without contributing to systemic incoherence.

Curve 1: AI Is Now Intimate, Powerful, and a Bit Uncanny

The most visible shift is the simplest to describe: AI is no longer a tool you use. It is a presence you relate to.

When the public begins asking "Is my tool conscious?", the relationship between people and their technology becomes a first-order design question. Not a philosophical curiosity. A design question.

Philosophers and cognitive scientists are publishing pieces with titles like "There is no such thing as conscious artificial intelligence." The question is not settled; they are responding to a growing wave of people who believe the opposite. Surveys show that 17–20% of AI researchers and of the general public think at least one AI system has subjective experience today, and far more consider it plausible in the near future.

Meanwhile, other researchers are assembling evidence for proto-consciousness in frontier models — arguing that the behavioral signatures, at minimum, warrant serious investigation rather than dismissal.

This is not a debate we need to settle here. What matters for practitioners is the implication: when the relationship between human and tool becomes ambiguous, designing that relationship becomes non-optional.

That is precisely where the conscious technologist lives — not in the camp of "AI is conscious" or "AI is not conscious," but in the discipline of treating the relationship itself as a design artifact that requires ongoing, deliberate attention.

Curve 2: The Habitat Itself Has Become "Conscious Tech"

There is a parallel shift happening at the infrastructure layer that most AI discourse misses entirely.

The term "conscious technology" is not only being used to describe AI. One European impact fund defines it as the broader category of technologies that are aware and responsive — phones, wearables, buildings, vehicles, traffic systems — constantly sensing, logging, and acting on human behavior.

Their framing is precise: "Data is increasingly a trigger of automated, instant and lasting consequence… The rise of conscious tech will bring us speed and prosperity — if we build a conscience around it."

In parallel, major financial analysts are structuring 2026 tech trends around four pillars: next-generation mobile networks, next-generation AI (including agentic AI that plans, decides, and executes autonomously), biotech, and sustainability. Agentic AI in particular — systems that don't just respond to prompts but coordinate other systems on your behalf — is reshaping the fundamental dynamic between humans and their environments.

The infrastructure implication is straightforward:

- The built environment is becoming sentient-ish: sensorized, datafied, capable of autonomous action.

- Decisions that once required a human in the loop are being delegated to algorithms and agents.

- The default behavior of your environment is no longer neutral. It has opinions. It takes actions. It makes decisions that have lasting consequences for your life.

"Conscious technology" in this context is not an aesthetic preference. It is a response to the fact that our habitat now has its own default behaviors — and someone needs to decide whether those defaults serve the human or merely serve the system.

Curve 3: Humans Are at Cognitive and Nervous-System Limits

At the individual level, the evidence has moved from academic observation to organizational crisis.

Working memory can hold approximately three to five chunks of information at once. After an interruption, people take an average of roughly twenty-three minutes to get back to the original task. Chronic stress responses from always-on screen exposure are now showing up in productivity data, mental health statistics, and healthcare systems at scale.

The "productivity tools" that were supposed to solve this problem created a new one: cognitive pollution. Too many tabs. Too many tools. Too many notifications. Too many AI assistants, each adding a new stream of coordination overhead without removing an old one.

This is the crisis that Conscious Stack Design was built to address. The language we use — tools as mirrors of subconscious patterns, stacks as geometry, the 1:3:5 Protocol to guard cognitive capacity — maps directly onto what is now showing up in mainstream research on burnout, attention disorders, and technology-related anxiety.

The cultural shift underway is significant. The frame is moving from "do more faster" to "protect attention, nervous systems, and meaning." That is exactly the move from classical productivity thinking to what we call cognitive sovereignty, the recognition that your ability to govern your own cognitive environment is the foundational capacity everything else depends on.

In that context, conscious technologists are the people asking the question that the productivity industry never asked: how do we architect stacks, agents, and environments that regulate rather than dysregulate humans?

The Governance Inflection

When you layer these three curves together, a governance question emerges that was previously considered philosophical but is now operational:

Who decides?

- Who owns the data that drives automated, lasting consequences for your life?

- Who encodes the values in systems that watch and respond to everything?

- Who gets to define alignment — labs, governments, or communities?

The European conscious-tech investors answer explicitly: autonomy, traceable data ownership, and transparent technologies are now core principles — and they frame this as a joint responsibility across business, government, and individuals.

At the same time, the AI policy world is centering AI alignment, AI welfare, catastrophic risk, and democratic input into model behavior — all of which converge on the same question: what is the relationship between systems and the societies they operate within?

This is the same governance layer we are building with cognitive sovereignty, Pingala-style coherence governance, and community-driven stack architecture. The world is catching up to that conversation. Not because we were ahead of our time, but because the structural conditions that make governance non-optional have finally become visible to everyone.

What This Means for You

If you are reading this, you are likely already sensing the convergence — even if you haven't had language for it.

Here is the frame:

The tech inflection. Frontier AI and agentic systems are moving from tools to co-workers and co-decision-makers. That forces us to design the relationship, not just the interface. The Pingala Handshake Protocol is one answer to that design challenge — a structured governance layer that ensures AI capability serves human intent rather than replacing it.

The habitat inflection. Our cities, homes, and workflows are now aware and responsive. Data no longer just informs — it triggers automated consequences. Conscious technologists make sure those consequences are humane and aligned. The 1:3:5 Protocol applies the same constraint geometry at the individual level that governance frameworks apply at the system level.

The human inflection. We have reached hard cognitive and nervous-system limits. Conscious Stack Design gives people and organizations a way to regain coherence, protect attention, and expand capacity without burning out. This is not optional optimization. It is cognitive health practice.

The governance inflection. Questions of agency, consent, and alignment are now board-level and policy-level. Conscious technologists are the bridge between deep tech and deep ethics — the people willing to hold both the engineering and the philosophical threads simultaneously.

The Name for What Is Already Happening

"Conscious tech" is not a brand. It is not a movement someone invented. It is the name for what happens when AI intimacy, data-sentient environments, and human nervous-system limits all hit the same inflection point at once.

The people who take this seriously — who design their relationship with technology deliberately, who build governance into their stacks rather than bolting it on after the fact, who treat their own cognitive environment as something worth architecting — are conscious technologists. The term simply describes what they already do.

The only question is whether you are willing to do it on purpose, in public, right when it matters most.

Start the practice: take the Stack Profile Quiz to see where you stand, read the Conscious Technologist framework, or learn how AI anxiety resolves through architecture.